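In IoT applications, edge computing, artificial intelligence and machine learning are on the rise. These technologies have moved beyond research and prototyping and are now being deployed in real-world use cases across many industries. The symbiotic relationship between edge computing and AI is especially interesting: AI needs extremely fast data processing, which edge computing can deliver, while AI in turn brings higher-performance computing and intelligence to the edge.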

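In this article, we explore edge computing (EC), artificial intelligence (AI) and machine learning (ML), and how this combination is changing network infrastructure, enabling new use cases and creating the next generation of data processing.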

The Data Center Paradigm Shift Leading to Edge Computing

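A data center centralizes an organization's IT operations and equipment. It houses computer systems and associated components such as telecommunications and storage systems. It typically also includes redundant power systems, data communication connections, environmental controls and security devices.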

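Over the past decade, the role and composition of data centers have changed significantly, and they continue to evolve. Building a data center used to be a long-term undertaking, with inefficient power and cooling, inflexible cabling, and no mobility within or between data centers. Today's data centers are all about speed, performance and efficiency.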

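Data center computing has historically held advantages over distributed on-premises hardware. Compute in a data center is relatively inexpensive and can process massive amounts of information on demand. Data centers are not perfect, however. One major drawback is that data must be sent to a central location for processing and then sent back to display results or trigger an action. This back-and-forth over limited-bandwidth links often slows applications down. We have all experienced this when loading a website hosted in a remote data center.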

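With the global rollout of next-generation 5G cellular networks, edge computing, machine learning and AI are now taking off. Edge computing processes data locally, near or right where the data is generated. This eliminates the need to send large amounts of information back and forth between edge devices and a centralized data center.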

Edge Intelligence

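One of the key enablers of edge intelligence (or edge AI) is compact, inexpensive yet powerful hardware. A few years ago, running AI locally was not feasible because the size and cost of the hardware would have been prohibitive. However, as Moore's Law continues to hold and computing power keeps getting cheaper, localized AI has become a reality. In fact, it is becoming so popular that consulting firm Deloitte predicted that 750 million edge AI chips would be built into devices in 2020 alone. Deloitte also expects this number to keep growing, forecasting sales of 1.5 billion edge AI chips by 2024.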

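Beyond analyzing these numbers in the abstract, it is instructive to look at real examples of how edge AI is being applied. Chipmaker NVIDIA is putting GPUs into surveillance cameras. The GPUs allow the cameras to run recognition software without streaming video back to a data center for processing. A smart city might have thousands of these cameras, so not having to stream all of that data to a data center can yield substantial cost savings. These AI-enabled cameras can not only perform recognition tasks but also use their local intelligence to help manage traffic and perform other advanced city functions. Smart cities are another topic we at Digi are passionate about.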

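Siri and Alexa are two more interesting examples of edge AI. Rather than relying on local hardware, these voice recognition platforms take advantage of edge networks. Because an edge network is essentially made up of many smaller, distributed data centers, information does not have to travel far to be processed. Edge networks are feasible for large companies such as Apple or Amazon. For smaller companies, however, localized edge computing combined with AI can deliver the best service at a reasonable cost.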

Advantages of Edge AI

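One of the main advantages of edge AI is speed. If data does not need to travel back and forth for processing, any task or action can be completed faster. Another benefit is earlier problem detection: by integrating smart devices and analytics, intelligence deployed at the edge delivers rapid insights.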

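AI combined with edge computing is well suited to identifying issues that could lead to system failures and to quickly delivering that data to the people who can address them.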

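Voice recognition increasingly relies on edge AI, especially since consumers expect immediate answers. There are also industrial uses, where AI-enabled cameras and other sensors can monitor production and make adjustments without connecting to a central processor.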

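This leads to another important point: edge AI can keep working without a network connection. If connectivity is lost, edge devices can continue operating normally, for example controlling the traffic lights at a busy intersection.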
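As a rough illustration of offline operation, the sketch below keeps a local control decision running regardless of connectivity and queues uplink messages while the link is down, flushing them once it returns (a store-and-forward pattern). The `EdgeController` class, the "more than 10 waiting cars" rule, the queue limit and the `send` callback are all hypothetical and not tied to any Digi API.

```python
from collections import deque
from typing import Callable, Deque, List


class EdgeController:
    """Hypothetical store-and-forward controller: local logic never waits on the network."""

    def __init__(self, send: Callable[[str], None], max_queued: int = 1000) -> None:
        self.send = send
        # If an outage outlasts the buffer, the oldest messages are dropped first.
        self.pending: Deque[str] = deque(maxlen=max_queued)

    def step(self, queue_length: int, link_up: bool) -> str:
        # Local decision (assumed rule): extend the green phase when many cars are waiting.
        phase = "extend_green" if queue_length > 10 else "normal_cycle"
        self.pending.append(f"queue={queue_length},phase={phase}")
        if link_up:
            self.flush()
        return phase

    def flush(self) -> None:
        # Deliver everything buffered during the outage, oldest first.
        while self.pending:
            self.send(self.pending.popleft())


sent: List[str] = []
ctrl = EdgeController(send=sent.append)
print(ctrl.step(queue_length=15, link_up=False))  # extend_green (decided while offline)
print(ctrl.step(queue_length=3, link_up=False))   # normal_cycle
print(len(sent))                                   # 0: nothing sent while offline
ctrl.step(queue_length=4, link_up=True)            # link restored: backlog is flushed
print(len(sent))                                   # 3
```

The control decision is computed before any network check, so an outage only delays reporting, never the traffic-light logic itself.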

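There is a misconception that edge computing will eventually replace cloud computing, but that is not necessarily the case. Some compute-intensive tasks still require a data center. The benefit of localized AI is that it can be programmed to filter data so that only the necessary information is transmitted to the cloud. In other words, instead of sending all local data from the device to the cloud, AI can ensure that only relevant data is transmitted. This saves not only bandwidth but also the cost of transmitting irrelevant data. The more processing that AI-managed edge hardware can handle, the less processing the data center has to do.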
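A minimal sketch of local filtering, assuming a fixed "normal" band stands in for the relevance decision a trained model would make: out-of-range readings are forwarded individually, while in-range readings are collapsed into one small summary. The `Reading` type, `filter_for_cloud` function and the 0 to 60 band are hypothetical.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List, Tuple


@dataclass
class Reading:
    sensor_id: str
    value: float


def filter_for_cloud(
    readings: List[Reading], low: float, high: float
) -> Tuple[List[Reading], Dict[str, float]]:
    """Forward only out-of-range readings; summarize the rest locally.

    The fixed [low, high] band is a stand-in for a model's relevance decision.
    """
    relevant = [r for r in readings if not (low <= r.value <= high)]
    normal = [r.value for r in readings if low <= r.value <= high]
    # One compact summary replaces every in-range reading.
    summary = {"count": float(len(normal)), "mean": mean(normal) if normal else 0.0}
    return relevant, summary


readings = [Reading("temp-1", v) for v in (21.0, 21.5, 22.0, 85.0, 21.8)]
to_send, summary = filter_for_cloud(readings, low=0.0, high=60.0)
print([r.value for r in to_send])  # [85.0]
print(summary["count"])            # 4.0
```

Swapping the threshold test for a model's prediction would not change the shape of the pipeline: decide relevance locally, send the few relevant records, and keep or summarize the rest.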

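In summary, edge AI computing offers the following advantages:

- Lower-latency processing (greater speed)

- Predictive insights that enable proactive, preemptive troubleshooting

- Greater uptime, because information can be processed even without a network connection

- Local separation of relevant data from irrelevant data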

Use Cases for AI and Edge Computing

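AI matters because it is the technology that enables high-level decision-making at the edge. With only limited capabilities, edge computing would not have taken off. Because AI can enable so many processes at the edge, however, it reduces the need for centralized computing power.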

Edge AI and Decision-Making

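An interesting aspect of AI is that it can be given decision-making authority. A good example is a smart camera used for safety in a production facility. If the camera detects a worker in a hazardous area, or another potentially dangerous obstruction, the AI-enabled camera can shut down all machinery operating in that area.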
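The decision step can be sketched as a pure function on the device: given the detections a vision model produced, return every machine in a zone where a person or obstruction was seen. The `Detection` type, the label names, the zone-to-machine mapping and the 0.6 confidence floor are hypothetical; the model that produces detections is assumed and not shown.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List, Set

# Labels treated as a hazard when seen inside a machine zone (assumed model classes).
TRIGGER_LABELS = {"person", "obstruction"}


@dataclass
class Detection:
    label: str         # class name produced by the camera's vision model (assumed)
    zone: str          # zone ID the detection was mapped to (assumed)
    confidence: float  # model score in [0, 1]


def machines_to_stop(
    detections: Iterable[Detection],
    hazard_zones: Dict[str, List[str]],
    min_confidence: float = 0.6,
) -> Set[str]:
    """Return every machine that should be halted, decided entirely on the device."""
    stop: Set[str] = set()
    for d in detections:
        if (
            d.label in TRIGGER_LABELS
            and d.confidence >= min_confidence
            and d.zone in hazard_zones
        ):
            stop.update(hazard_zones[d.zone])
    return stop


zones = {"press-area": ["press-1", "press-2"], "conveyor": ["belt-A"]}
seen = [
    Detection("person", "press-area", 0.92),  # worker inside a hazard zone
    Detection("person", "walkway", 0.99),     # safe area: ignored
    Detection("forklift", "conveyor", 0.90),  # not a trigger label: ignored
]
print(sorted(machines_to_stop(seen, zones)))  # ['press-1', 'press-2']
```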

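AI cameras can also decide which data to forward to human operators. For example, an AI camera in an office building can be programmed to recognize the face of everyone who works there. If the camera sees someone it does not recognize, it alerts security. For monitoring foot traffic, this is far more effective than having a security guard "watch" one camera (or 12 cameras) around the clock looking for suspicious behavior. As IoT infrastructure expands in homes and workplaces, AI-enabled smart devices promise to take functionality to a new level.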
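One common way to implement "recognize known faces, alert on strangers" is to compare a face embedding against stored embeddings of employees and alert when no match is close enough. The sketch below assumes the embeddings already come from some face-recognition model (not shown); the toy 3-element vectors, the names and the 0.8 cosine-similarity threshold are hypothetical. Only the alert leaves the device, not the video.

```python
import math
from typing import Dict, Optional, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity of two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def match_face(
    embedding: Sequence[float], known: Dict[str, Sequence[float]], threshold: float = 0.8
) -> Optional[str]:
    """Return the best-matching known person, or None if nobody is close enough."""
    best_name, best_score = None, -1.0
    for name, ref in known.items():
        score = cosine(embedding, ref)
        if score > best_score:
            best_name, best_score = name, score
    return best_name if best_score >= threshold else None


def should_alert(embedding: Sequence[float], known: Dict[str, Sequence[float]]) -> bool:
    # Only unrecognized faces generate traffic to the security desk.
    return match_face(embedding, known) is None


staff = {"alice": [1.0, 0.0, 0.0], "bob": [0.0, 1.0, 0.0]}
print(match_face([0.9, 0.1, 0.0], staff))    # alice
print(should_alert([0.0, 0.0, 1.0], staff))  # True
```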

When Edge AI Becomes Mission-Critical

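The use cases are in fact very broad, spanning a wide variety of industries and future applications. As discussed above, edge AI's ability to detect and report failure precursors has major implications both for predictive maintenance and for critical decisions in mission-critical applications. Consider remote assets such as storage tanks, mining conveyors and energy systems: every hour they are offline for maintenance can cost hundreds of thousands of dollars, and if a critical issue goes unidentified, there is a real risk of fire, explosion or meltdown.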
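A simple statistical stand-in for failure-precursor detection is a rolling z-score: flag any sample that sits far outside the recent baseline. This is not the machine-learning model the article has in mind, just a minimal sketch of the "detect locally, report only the anomaly" idea; the window of 20 samples, the limit of 3 standard deviations and the vibration values are assumptions.

```python
from collections import deque
from statistics import mean, pstdev
from typing import Deque, Iterable, List


def precursor_alerts(samples: Iterable[float], window: int = 20, z_limit: float = 3.0) -> List[int]:
    """Return indices of samples more than z_limit standard deviations from the rolling mean."""
    history: Deque[float] = deque(maxlen=window)
    alerts: List[int] = []
    for i, x in enumerate(samples):
        # Only score once a full baseline window is available.
        if len(history) == window:
            mu = mean(history)
            sigma = pstdev(history)
            if sigma > 0 and abs(x - mu) / sigma > z_limit:
                alerts.append(i)
        history.append(x)
    return alerts


# Hypothetical vibration amplitudes: a steady baseline, then a sudden spike.
vibration = [1.0, 1.1] * 20 + [3.0]
print(precursor_alerts(vibration))  # [40]
```

On a real asset, each returned index would become a small alert message sent upstream, while the raw vibration stream stays on the device.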

Edge AI, Machine Learning, 5G and the Future of Autonomous Vehicles

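While society may be eager for self-driving cars, many more systems and technologies must mature before that reality is fully achieved. For example, recognizing objects crossing the road, sudden changes in road conditions and the appearance of roadside signs are all critical. AI, machine learning and high-speed 5G networks will all enable autonomous vehicles by supporting these critical pieces. For instance, a vehicle must be able to recognize, in real time, a "Stop", "Slow" or "Yield" sign held up by a road worker and act on that information.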
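Why "real time" matters can be shown with simple arithmetic: the distance a vehicle covers while a decision is still in flight. The speeds and latency values below are illustrative assumptions, not measurements of any network or vehicle.

```python
def distance_during_latency_m(speed_kmh: float, latency_ms: float) -> float:
    """Metres travelled before a decision with the given latency can take effect."""
    speed_m_per_s = speed_kmh * 1000.0 / 3600.0
    return speed_m_per_s * latency_ms / 1000.0


# At 100 km/h (about 27.8 m/s), compare an assumed slow round trip with a fast local decision.
print(round(distance_during_latency_m(100, 100), 2))  # 2.78 (metres at 100 ms)
print(round(distance_during_latency_m(100, 10), 2))   # 0.28 (metres at 10 ms)
```

Every 10 ms of latency at highway speed is roughly another 28 cm travelled before the car reacts to the sign.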

Are Edge Computing and AI Secure?

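Use a secure embedded solution (such as the Digi ConnectCore i.MX 8 module) and deploy on secure devices such as the Digi IX20. You want to work with a device manufacturer that takes IoT security seriously and builds security into its solutions to support a multi-layered security approach in your deployed applications.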

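With edge computing, most data is processed locally. That data is at lower risk of compromise than data that is sent to a data center, stored for an unknown period, processed and sent back to the device. If the edge device and the local network it connects to are secure and well protected by a firewall, the data is well protected too.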

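However, there are several factors to consider regarding where security could be compromised:

- Edge devices may not receive updates on schedule. It is important to buy devices from a manufacturer that updates them regularly, then monitor device security, stay informed about security threats across the industry, and proactively keep edge devices compliant. This is a key capability of Digi Remote Manager®.

- Because edge devices are readily available for purchase, hackers can easily buy them to probe for vulnerabilities. It is important to follow industry news and stay informed about any vulnerabilities discovered in specific devices. Note that Digi maintains a Security Center, a valuable resource for anyone building or deploying IoT solutions.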
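Keeping a fleet compliant starts with knowing which devices are behind. The sketch below compares each device's firmware version against a minimum required version and lists the laggards. The inventory, device names and version strings are hypothetical; in practice the inventory would come from a device management platform such as Digi Remote Manager, but this sketch does not call Remote Manager or any other real API.

```python
from typing import Dict, List, Tuple


def parse_version(v: str, width: int = 3) -> Tuple[int, ...]:
    """Turn '21.11' into (21, 11, 0) so versions of different lengths compare correctly."""
    parts = [int(p) for p in v.split(".")]
    return tuple(parts + [0] * (width - len(parts)))


def outdated_devices(inventory: Dict[str, str], minimum: str) -> List[str]:
    """Return the sorted IDs of devices whose firmware is older than `minimum`."""
    floor = parse_version(minimum)
    return sorted(dev for dev, fw in inventory.items() if parse_version(fw) < floor)


fleet = {"gw-north": "22.2.9", "gw-south": "23.3.1", "rtu-7": "21.11"}
print(outdated_devices(fleet, minimum="23.3.0"))  # ['gw-north', 'rtu-7']
```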

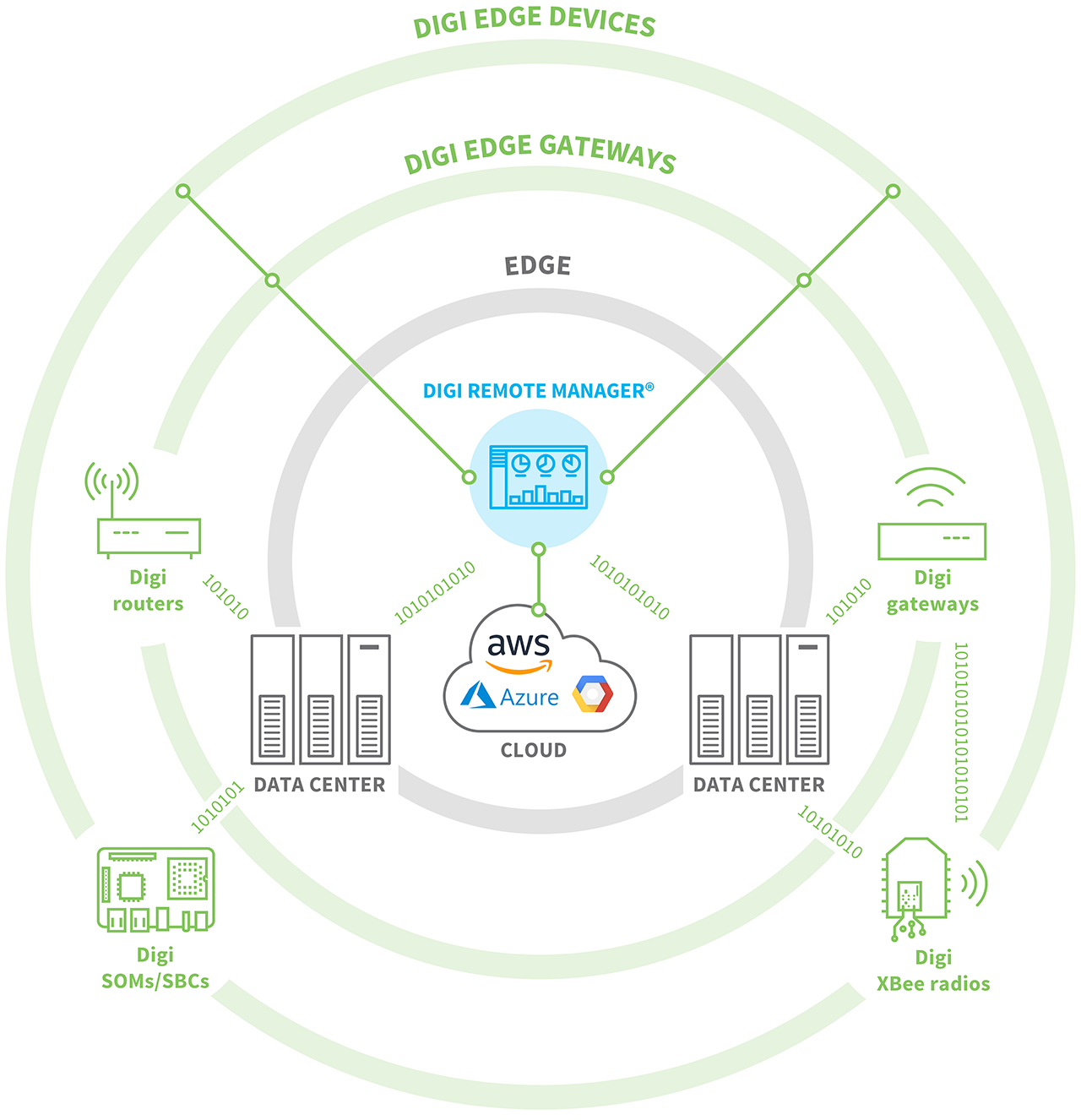

Where Do Digi Solutions Fit with Edge Computing and AI?

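As a provider of IoT solutions since the earliest days of the Internet of Things, Digi has spent decades helping customers solve data connectivity challenges from the data center to the edge. Our cellular gateway and router solutions provide critical connectivity for edge nodes, including sensors, controllers and RTUs, and process data at the speed needed to identify and route critical data in mission-critical applications. Learn more in our blog post, What Is Edge Computing?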

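Digi products can also be programmed through Python integration, BASH scripts or even native C Linux applications, enabling developers to build in-node processing and define highly sophisticated processing and intelligence at the edge. Digi edge routers and gateways also support aggregating edge nodes for further processing. The edge device can then host customer applications that perform additional edge processing as each application requires. Learn more on our Edge Computing web page.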
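As a generic illustration of edge-node aggregation in Python, the sketch below groups raw samples by the node that produced them and reduces each group to a few statistics before anything is forwarded. It uses only the standard library; no Digi-specific modules are assumed, and how the gateway actually collects the samples (serial, Modbus, XBee or otherwise) is out of scope. The node IDs and values are hypothetical.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, List, Tuple


def aggregate(samples: Iterable[Tuple[str, float]]) -> Dict[str, Dict[str, float]]:
    """Group (node_id, value) samples by node and summarize each group."""
    by_node: Dict[str, List[float]] = defaultdict(list)
    for node_id, value in samples:
        by_node[node_id].append(value)
    return {
        node: {"min": min(v), "max": max(v), "mean": mean(v), "n": float(len(v))}
        for node, v in by_node.items()
    }


raw = [("rtu-1", 4.0), ("sensor-2", 10.0), ("rtu-1", 6.0), ("sensor-2", 14.0)]
result = aggregate(raw)
print(result["rtu-1"]["mean"])    # 5.0
print(result["sensor-2"]["max"])  # 14.0
```

Forwarding one summary per node per interval, instead of every raw sample, is one way a gateway can reduce upstream traffic while still giving the data center a usable picture.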

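In addition, developers have a full suite of resources for designing and building the most complex, high-performance, low-latency applications with Digi ConnectCore and Digi XBee solutions. Each ecosystem offers complete documentation, code libraries and built-in security, and integrates with Digi Remote Manager, Digi's remote management solution. You can learn more about Digi's embedded solutions for current and future AI, machine learning and machine vision applications in our blog post, Real-Time Edge Processing Makes Machine Learning and Machine Vision Work Better.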

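Finally, Digi Wireless Design Services can be a resource for identifying the key requirements, architecture and components of your edge AI solution. This process can help your team make critical decisions throughout development, including any or all of the following:

- Conduct trade-off analyses to optimize every aspect of the design.

- Provide engineering support to augment your engineering team.

- Ensure no critical integration or interoperability requirements are missed (such as security, latency, bandwidth, processing speed, battery, certification and data visualization).

- Provide guidance on how to design, build and deploy the solution for optimal efficiency, functionality and cost savings.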

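Digi WDS has extensive experience in every aspect of designing and engineering edge computing and AI systems. Whether you just need some consulting or want to augment your engineering team, Digi WDS can support your project and help ensure it meets all key objectives, including certification and time to market.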

The Future of Edge Computing

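According to IoT Business News, "for every 100 miles data travels, it loses about 0.82 milliseconds." This quickly adds up to significant latency. AI-enabled edge computing addresses the problem: because processing happens on site, this transmission delay is largely eliminated. Alternatively, when local processing power is insufficient, AI can decide to send the relevant information to the data center while keeping irrelevant data on a local drive.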
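Taking the quoted figure of 0.82 ms per 100 miles at face value, the transit delay for a round trip to a distant data center is easy to estimate. The distances below are illustrative assumptions, and the estimate counts distance only; queuing, routing and processing time would add to it.

```python
MS_PER_100_MILES = 0.82  # figure quoted from IoT Business News


def transit_latency_ms(one_way_miles: float, round_trip: bool = True) -> float:
    """Distance-only latency estimate; a round trip doubles the miles travelled."""
    miles = one_way_miles * (2 if round_trip else 1)
    return miles / 100.0 * MS_PER_100_MILES


# Assumed scenario: a data center 1,000 miles away versus a gateway on site.
print(round(transit_latency_ms(1000), 2))  # 16.4
print(transit_latency_ms(0.1))             # a tiny fraction of a millisecond
```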

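A review by Gartner found that as of 2018, only 10% of data was processed at the edge. Gartner expects, however, that by 2025, 75% of data processing will take place at the edge. This is a huge shift, driven by increasingly powerful hardware and intelligent AI systems that can process information, communicate across networks and make instant local decisions faster than ever before.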

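What's more, the 5G networks being deployed today create opportunities to develop and deploy high-speed, low-latency applications that require data transfers measured in fractions of a second, bringing us close to fully realizing the potential of AI and edge computing.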

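What are your plans, and how can edge AI help? Digi experts can work with you to define your next steps and to design and build your solution, harnessing the latest technologies and the next-generation advantages of edge computing, machine learning and 5G.

Contact us to start the conversation!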