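In this blog post, we introduce Digi ConnectCore® Voice Control, a new solution in Digi's family of embedded solutions. The technology performs voice processing on devices at the network edge, with no cloud connection required. Voice integration in product design is drawing a lot of attention today, for several reasons, and we expect this area to grow as applications across vertical industries integrate interactive voice recognition.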

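Ease of use is often what sets successful products apart in the market. For original equipment manufacturers (OEMs) building solutions with embedded computing, this usually comes down to creating an intuitive, user-friendly product interface. And no interface is friendlier than operating a device by voice.

The benefits of voice control include better hygiene, faster human-machine interaction, and more precise operation. Edge processing lowers connectivity costs and reduces data privacy concerns, while delivering faster response times than cloud-based voice processing.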

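Getting Humans and Machines on the Same Page

Many products with embedded computing require user input and display information that the user must understand or act on. This part of the product is called the human-machine interface (HMI). Today the HMI is usually delivered through a display, and input methods have evolved from buttons, mice, and keyboards to touchscreens that mimic smartphone operation.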

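As of 2022, most users expect electronic products to have a smartphone-like interface. For OEMs, however, developing such an interface on embedded Linux is difficult and expensive. It requires skilled user interface developers and additional graphical user interface (GUI) software tools. The software itself may be open source, but the more capable tools usually require purchasing a development environment and per-device licenses.

In addition, off-the-shelf touchscreen hardware is expensive and adds significantly to the bill of materials (BOM) cost of an embedded product. Glass displays break or get damaged easily in daily use in industrial environments, leading to costly repairs or replacements. In the medical and food industries, device manufacturers face a further problem: hygiene, and the spread of surface bacteria from one user to the next.

Finally, most touch and display products designed for the smartphone market cannot meet the long service life (10 years or more) expected of commercial or industrial products.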

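Voice Control: The Ideal HMI

Voice control is an ideal way to address these problems. A voice-controlled device lets users interact with it from a distance, even when they cannot see it. That means they can keep their attention on the task at hand rather than on the device.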

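Voice is also a very efficient way to enter data. Most people speak at about 150 words per minute, while the average typing speed is about 40 words per minute. Together, these two advantages let users make relatively complex requests quickly.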
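To make the speed comparison concrete, here is a minimal Python sketch of the arithmetic. The two rates come from the figures above; the 30-word request length is an assumption chosen only for illustration.

```python
# Rates quoted in the text: about 150 words/min spoken, about 40 words/min typed.
SPEAKING_WPM = 150
TYPING_WPM = 40


def entry_time_seconds(word_count: int, words_per_minute: float) -> float:
    """Return the time in seconds needed to enter `word_count` words."""
    return word_count * 60 / words_per_minute


# Hypothetical request length, used only as an example.
request_words = 30

spoken = entry_time_seconds(request_words, SPEAKING_WPM)
typed = entry_time_seconds(request_words, TYPING_WPM)
speedup = typed / spoken

print(f"spoken: {spoken:.0f} s, typed: {typed:.0f} s, speedup: {speedup:.2f}x")
```

At these rates the ratio is 150 / 40 = 3.75 regardless of request length, so speaking a request takes a little over a quarter of the time needed to type it.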

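Voice control has clear advantages in industrial applications. For example, it can improve user safety by letting operators focus on the task itself instead of on touch interactions with the equipment. In medical settings such as operating rooms, voice-controlled devices allow touch-free interaction, which helps avoid spreading bacteria.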

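Introducing Digi ConnectCore Voice Control

Digi ConnectCore Voice Control is a ready-to-use software solution, pre-integrated into Digi Embedded Yocto, for use with the Digi ConnectCore family of system-on-modules (SOMs). ConnectCore Voice Control provides real-time speech recognition and text-to-speech, with a customizable wake word, a customizable vocabulary of 60,000 words, and support for 30 languages.

ConnectCore Voice Control brings complete voice processing to the IoT edge for any device built on a Digi ConnectCore module, enabling touch-free interaction between users and devices. It does not need a hardware AI/ML accelerator to run, so product developers can add voice capability with no hardware cost beyond an off-the-shelf microphone and speaker.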

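Voice Processing Works Better at the Edge

Why process at the IoT edge? If you have used popular consumer voice assistants such as Apple Siri or Amazon Alexa, you may have noticed a slight delay during the interaction, even when the device is in your hand or on your kitchen counter. The delay occurs because the processing behind almost all consumer voice applications happens in the cloud.

If you are picking a song or sending a text message, a delay of a few tenths of a second may not matter. But when information is flowing continuously or you are making precise adjustments, that delay makes voice control far less effective. And any interruption in cloud connectivity makes the problem worse.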

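ConnectCore Voice Control, by contrast, performs voice processing locally at the edge and delivers real-time performance, with response times under 100 milliseconds, whereas cloud-based voice processing is subject to variable latency. It also eliminates the connectivity costs of a cloud-based solution.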
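The difference can be pictured as a latency budget: an on-device pipeline only has to capture and recognize the audio, while a cloud pipeline adds a network round trip. The Python sketch below is illustrative only. The 100 ms budget is the figure quoted above; every per-stage number is a placeholder assumption, not a measurement of ConnectCore Voice Control or of any cloud service.

```python
from typing import Mapping

BUDGET_MS = 100.0  # response-time figure quoted in the text


def total_latency_ms(stages: Mapping[str, float]) -> float:
    """Sum the per-stage latencies of a pipeline, in milliseconds."""
    return sum(stages.values())


def within_budget(stages: Mapping[str, float], budget_ms: float = BUDGET_MS) -> bool:
    """Return True if the whole pipeline finishes in under `budget_ms`."""
    return total_latency_ms(stages) < budget_ms


# Placeholder stage timings (assumptions for illustration, not measurements).
on_device = {"capture": 20.0, "recognition": 60.0}
cloud = {"capture": 20.0, "uplink": 80.0, "recognition": 60.0, "downlink": 80.0}

for name, stages in (("on-device", on_device), ("cloud", cloud)):
    print(name, total_latency_ms(stages), "ms, within budget:", within_budget(stages))
```

With real measurements substituted for the placeholders, the same check shows how much of the budget the network stages alone would consume.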

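30 Languages, 60,000 Words

Most voice control applications on the market work in only two languages: English and Mandarin Chinese. ConnectCore Voice Control can communicate in 30 languages, a major advantage when developing products for global deployment.

Processing data locally effectively eliminates the privacy and security concerns that arise when data is sent over a network to a cloud service. Because no internet connection is needed, data stays private. ConnectCore Voice Control complies with the EU General Data Protection Regulation (GDPR), another key advantage for global deployment.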

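Voice Control Use Cases

Voice control is a valuable capability in a wide range of scenarios. Given that most people speak at about 150 words per minute but type at an average of 40 words per minute, the gains in speed and precision are valuable across many kinds of human-machine interaction. Here are some examples: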

- Smart cities and retail

  - Parking meters

  - Kiosks or terminals that provide directions or event information

  - Vending machines

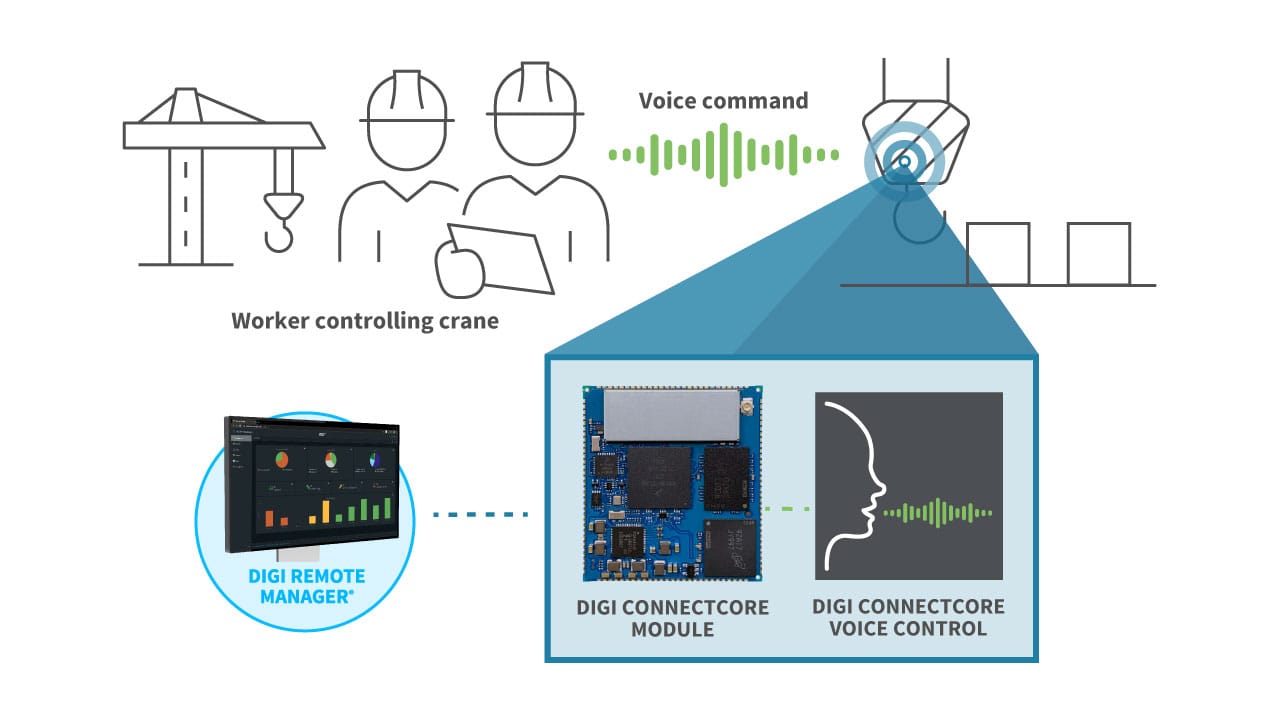

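- Industrial operations

  - Voice-controlled industrial cranes, so the operator can watch the material being moved instead of the controls

  - Robot controls that let users start operations with preset commands

  - Process control, for example in harsh environments where gloves are required and touchscreens do not work properly

  - Measurement equipment that collects sensor readings and other measurements through voice interaction

  - Technician work reports and data collection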

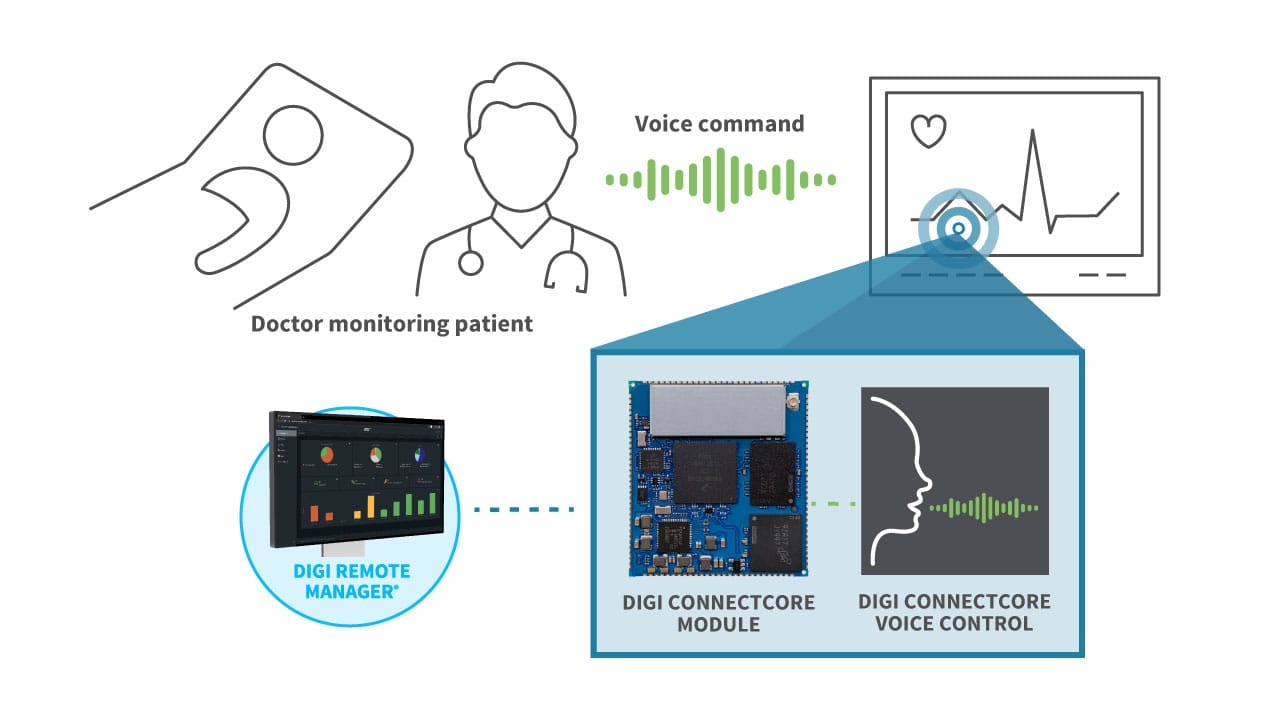
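As a rough illustration of the preset-command idea in the industrial examples above, the Python sketch below maps recognized phrases to actions. It is not the ConnectCore Voice Control API: the `CommandDispatcher` class, the phrases, and the handlers are all hypothetical, and the code assumes the speech engine has already turned the audio into text.

```python
from typing import Callable, Dict, Optional


def normalize(phrase: str) -> str:
    """Lowercase a phrase and collapse whitespace so matching is tolerant."""
    return " ".join(phrase.lower().split())


class CommandDispatcher:
    """Hypothetical helper that maps recognized phrases to preset actions."""

    def __init__(self) -> None:
        self._handlers: Dict[str, Callable[[], str]] = {}

    def register(self, phrase: str, handler: Callable[[], str]) -> None:
        """Associate a preset phrase with the action to run."""
        self._handlers[normalize(phrase)] = handler

    def dispatch(self, recognized_text: str) -> Optional[str]:
        """Run the matching action, or return None for unknown phrases."""
        handler = self._handlers.get(normalize(recognized_text))
        return handler() if handler is not None else None


# Example phrases and actions, invented for illustration.
dispatcher = CommandDispatcher()
dispatcher.register("start conveyor", lambda: "conveyor started")
dispatcher.register("stop conveyor", lambda: "conveyor stopped")

print(dispatcher.dispatch("Start  Conveyor"))
print(dispatcher.dispatch("open door"))
```

Restricting the device to an explicit table of phrases keeps behavior predictable: anything outside the table is ignored rather than guessed at.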

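- Medical and healthcare

  - Operating room equipment: interacting by voice is more convenient and more hygienic than using a touchscreen or keyboard

  - Home healthcare: nurses recording medication, treatments, and similar information

  - Voice-prompted medical checklists in hospitals, for example when preparing or examining a patient before treatment

  - Clinical note transcription to improve efficiency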

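Add Digi ConnectCore Voice Control to Your Next Product

For OEM developers considering a voice interface for their next product, whether as a launch feature or a future enhancement, Digi ConnectCore Voice Control provides pre-integrated, ready-to-use software for development on Digi ConnectCore modules.

The development software can be downloaded from the Digi ConnectCore Voice Control documentation site. As part of the download, Digi provides a single software license for evaluation and development to customers who have purchased the Digi ConnectCore 8M Nano development kit. (For deployment, OEMs can purchase a license for each device they sell, either from the software vendor or through Digi.) The download can be used to develop proofs of concept, demonstrate voice capabilities, and design voice control applications for new products.

To learn more, download the Digi ConnectCore Voice Control datasheet.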

Next Steps